The LLM API Gateway Built for Developers

One endpoint for all major models — OpenAI, Anthropic, Google, Mistral, and more. Smart routing, real-time cost tracking, and automatic failover. Pay with WeChat, Alipay, or Stripe. Native support for Claude Code and Codex.

- 20+ models from OpenAI, Anthropic, Google, Mistral, and DeepSeek

- One OpenAI-compatible endpoint: api.router.one/v1

- WeChat Pay, Alipay, Stripe, USDT — no US credit card required

- Native Claude Code and Codex CLI integration in under 2 minutes

Connect Claude Code & Codex in 2 Minutes

Change one config file and start using Claude Code and Codex through Router One.

Stable & Reliable

Independent API keys and deployment — no account bans or rate limits

Lower Cost

Cheaper than direct access, precise per-token billing

Usage Tracking

Real-time visibility into token usage and cost per request

    # Claude Code → Route through Router One
    ANTHROPIC_BASE_URL="https://api.router.one"
    ANTHROPIC_API_KEY="sk-xxx"
    ANTHROPIC_AUTH_TOKEN="sk-xxx"

Why Router One

Stable, easy, secure, hassle-free — focus on building, not infrastructure.

Stable Access

Reliable, consistent API access for Claude Code and OpenAI Codex. Independent deployment, immune to upstream bans and rate limits.

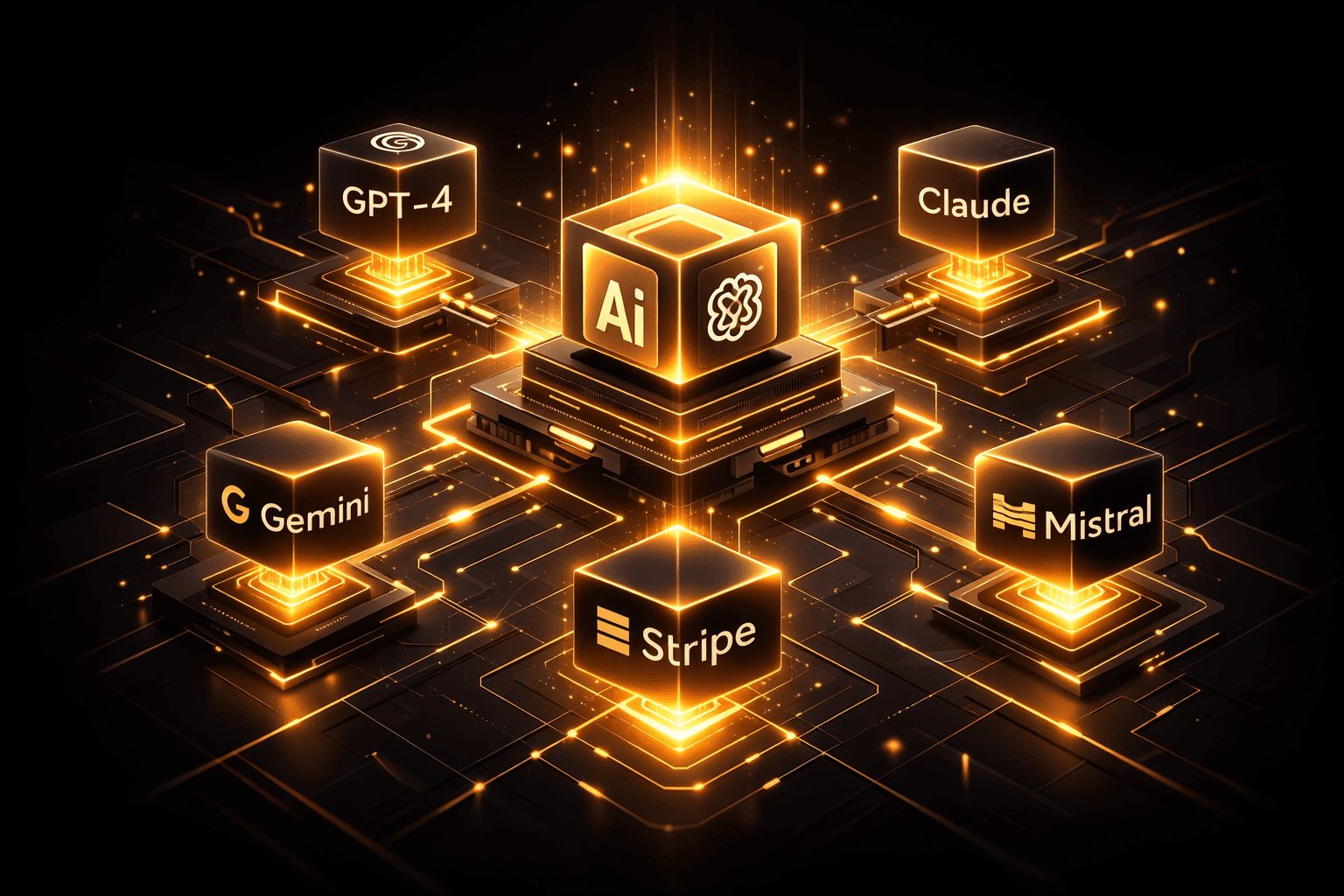

Aggregated API

One endpoint for OpenAI, Anthropic, Google, and more. Standardized interface — no juggling multiple SDKs.

Secure & Transparent

Independent API key management, real-time request processing with no data retention. Full usage monitoring and cost tracking.

Flexible Payment

Pay with WeChat Pay, Alipay, Stripe, or crypto. Use wallet top-ups, optional subscriptions, and token-metered billing together.
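As a rough illustration of the per-request cost tracking and token-metered billing described above, the arithmetic can be reproduced on the client side. This is only a sketch: it assumes responses carry the standard OpenAI-style `usage` fields (`prompt_tokens`, `completion_tokens`), and the `Price` type, the `request_cost` helper, and any per-million-token prices you pass in are hypothetical, not Router One's actual rates or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """Hypothetical price sheet entry, in USD per one million tokens."""
    input_per_mtok: float
    output_per_mtok: float


def request_cost(usage: dict, price: Price) -> float:
    """Estimate the cost of one request from its token counts.

    `usage` is assumed to look like the OpenAI-style usage object:
    {"prompt_tokens": int, "completion_tokens": int, ...}
    Missing fields are treated as zero tokens.
    """
    prompt_tokens = usage.get("prompt_tokens", 0)
    completion_tokens = usage.get("completion_tokens", 0)
    total = (
        prompt_tokens * price.input_per_mtok
        + completion_tokens * price.output_per_mtok
    )
    return total / 1_000_000
```

With the OpenAI Python SDK, `response.usage.model_dump()` should produce a dict of this shape, so the helper can be called right after a chat completion to log an estimated cost per request.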

Smart Routing, Built In

Don't just call models — route them intelligently. Router One picks the optimal path for every request based on your priorities.

Auto Fallback

If a provider goes down, traffic reroutes instantly to a healthy one. Zero downtime, zero manual intervention.

Cost Optimization

Route by cost weight to always hit the cheapest provider for the same model. Cut spend without changing a line of code.

Latency-Aware

Latency-first routing sends each request to the fastest available endpoint, measured in real time.

Quality Scoring

Assign quality weights to balance between cost, speed, and output quality — per project, per model.

How routing decides

For each request, Router One scores the candidate providers on three signals: EWMA latency over the last 50 calls, per-model cost markup, and a rolling 5xx error rate. The highest-scoring provider is invoked; on a 5xx response or a timeout, fallback to a healthy provider fires within 200 ms.
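The scoring step above can be sketched roughly as follows. This is a minimal illustration, not Router One's implementation: the `Provider`, `Weights`, `score`, and `rank` names are hypothetical, the smoothing factor `2 / (N + 1)` for a 50-call span is an assumption, the error-rate window is assumed to be the same 50 calls, and the normalisation and default weights are made up for the example.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import List, Optional

WINDOW = 50                 # "last 50 calls" from the description above
ALPHA = 2 / (WINDOW + 1)    # assumed EWMA smoothing factor for a 50-call span


@dataclass
class Provider:
    name: str
    cost_markup: float                      # e.g. 1.10 = 10% over list price (assumed scale)
    ewma_latency_ms: Optional[float] = None
    statuses: deque = field(default_factory=lambda: deque(maxlen=WINDOW))

    def record(self, latency_ms: float, status: int) -> None:
        """Fold one completed call into the rolling statistics."""
        if self.ewma_latency_ms is None:
            self.ewma_latency_ms = latency_ms
        else:
            self.ewma_latency_ms = (
                ALPHA * latency_ms + (1 - ALPHA) * self.ewma_latency_ms
            )
        self.statuses.append(status)

    @property
    def error_rate(self) -> float:
        """Share of 5xx responses in the rolling window (0.0 with no history)."""
        if not self.statuses:
            return 0.0
        return sum(500 <= s < 600 for s in self.statuses) / len(self.statuses)


@dataclass(frozen=True)
class Weights:
    # Hypothetical default weights; they stand in for per-project priorities.
    latency: float = 0.4
    cost: float = 0.2
    errors: float = 0.4


def score(p: Provider, max_latency: float, max_cost: float, w: Weights) -> float:
    """Higher is better: 1 minus a weighted penalty of normalised signals."""
    latency = (p.ewma_latency_ms or 0.0) / max_latency if max_latency else 0.0
    cost = p.cost_markup / max_cost if max_cost else 0.0
    return 1.0 - (w.latency * latency + w.cost * cost + w.errors * p.error_rate)


def rank(providers: List[Provider], w: Weights = Weights()) -> List[Provider]:
    """Best provider first; the remainder serves as the fallback order."""
    if not providers:
        return []
    max_latency = max((p.ewma_latency_ms or 0.0) for p in providers)
    max_cost = max(p.cost_markup for p in providers)
    return sorted(
        providers,
        key=lambda p: score(p, max_latency, max_cost, w),
        reverse=True,
    )
```

In this sketch the first element of `rank(...)` is the provider to invoke, and the rest of the list is the order to try if that call returns a 5xx or times out. Shifting weight toward `cost` or `latency` approximates the cost-first and latency-first modes described earlier.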

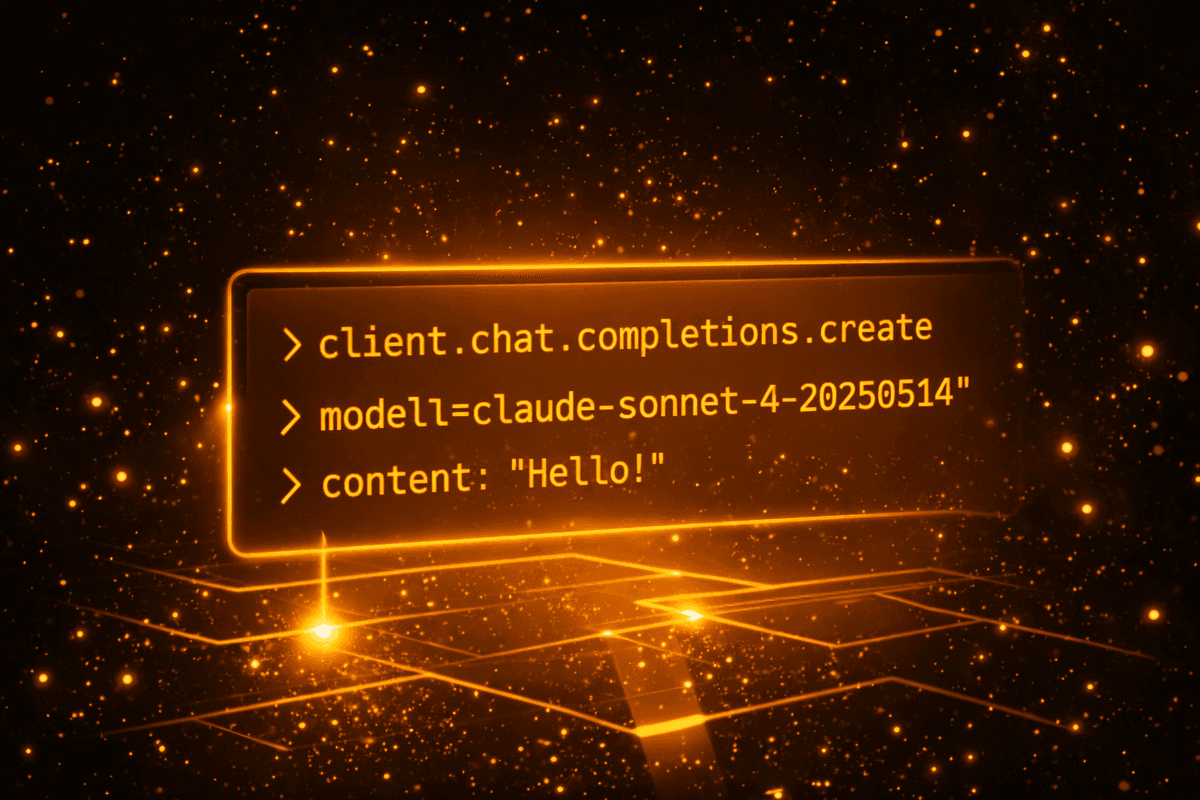

A Few Lines of Code to Get Started

Whether you are calling the aggregated API or configuring AI coding tools, it only takes a few lines.

    from openai import OpenAI

    client = OpenAI(
        base_url="https://api.router.one/v1",
        api_key="sk-xxx"
    )

    response = client.chat.completions.create(
        model="claude-sonnet-4-20250514",
        messages=[{"role": "user", "content": "Hello!"}]
    )

    # Works with any OpenAI-compatible SDK

Subscription Plans

Predictable monthly credits, unlimited model access, and sitewide discounts.

Explore Router One

Detailed guides and landing pages for the specific problems we solve.

Ready to Simplify Your AI Infrastructure?

Stop juggling multiple API keys, worrying about provider outages, and losing track of costs. Router One gives you one endpoint, smart routing, and full visibility — set up in minutes, pay only for what you use.